Wan2.2 - Text 2 Image Generation?

Using the Video Model for Image Generation

Reddit, along with a few other forums, has seen a lot of chatter recently about using the Wan2.2 video model for image generation, with claims that it is better than Flux.1 Dev and Flux.1 Krea. I’ll admit I was sceptical, but these comments intrigued me, so I thought I would give it a quick try.

The easiest approach to creating a single image from Wan2.2 is just to use a normal video workflow and set the number of frames to one. However, workflows optimised for image generation rather than video are starting to appear. This Reddit thread has some good information and links.

The workflow I used has options to use Torch and Sage Attention as accelerators, and these will need to be installed separately if you wish to use them. Sage Attention has been a tricky issue on Windows systems, but I successfully installed it along with the Triton prerequisite by referring to this Reddit thread. The script referenced for installing on ComfyUI Desktop, however, has some issues. For example, it refers to ‘ComfyUI’ rather than ‘ComfyUIDesktop’ for the directory location, and it refers to ‘venv’ rather than the ‘.venv’ used by ComfyUI Desktop. These issues stop the script from running correctly if you are using ComfyUI Desktop, and I also had issues with it invoking the Python core.

I found it easier to read through the script and run the steps manually, with the correct references, in the ComfyUI Desktop terminal than to troubleshoot the script errors. Hopefully the script will get updated, as Sage Attention installation can be a real pain on Windows.
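After a manual install it can be worth confirming that the packages are actually visible to the Python environment ComfyUI Desktop uses. The following is only a minimal sketch: the import names `triton` and `sageattention` are assumptions and may differ depending on the builds you installed, and `check_packages` is just an illustrative helper name. Run it from the ComfyUI Desktop terminal so that it uses the same interpreter.

```python
import importlib.util
import sys


def check_packages(names):
    """Return {name: bool} saying whether each top-level package can be found."""
    return {name: importlib.util.find_spec(name) is not None for name in names}


if __name__ == "__main__":
    # This path should sit inside the '.venv' directory used by ComfyUI Desktop.
    print("Interpreter:", sys.executable)

    # Import names are assumptions; adjust them to match your install.
    for name, found in check_packages(["torch", "triton", "sageattention"]).items():
        print(f"{name}: {'found' if found else 'NOT found'}")
```

If the interpreter path is not inside ‘.venv’, the packages were probably installed into a different Python environment from the one ComfyUI Desktop runs.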

Wan2.2 is a hefty model, so unless you have high-end hardware you will probably need a quantised version. Wan2.2 actually consists of two models, a high noise and a low noise version, both of which are required. Using my 16GB VRAM card I could run the Q8 quantised versions happily at around 22 seconds per step. I also used the FusionX and Lightx2v acceleration LoRAs, which reduced the number of steps to 4 per model (8 in total across the high and low noise models), giving a full render time of around 170-200 seconds.
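As a quick sanity check on those numbers, the sampling time can be estimated from the figures quoted above. This is only back-of-the-envelope arithmetic; the function name is illustrative, and it assumes every step takes the same time on both models.

```python
SECONDS_PER_STEP = 22  # step time quoted above
STEPS_PER_MODEL = 4    # steps per model with the acceleration LoRAs
NUM_MODELS = 2         # high noise + low noise


def sampling_seconds(seconds_per_step, steps_per_model, num_models):
    """Estimate total sampling time, assuming a constant time per step."""
    return seconds_per_step * steps_per_model * num_models


total = sampling_seconds(SECONDS_PER_STEP, STEPS_PER_MODEL, NUM_MODELS)
print(f"Estimated sampling time: {total} s")  # 22 * 4 * 2 = 176
```

The estimate of 176 seconds sits at the lower end of the 170-200 second range; anything above that is presumably overhead outside the sampling steps themselves, such as loading the models and decoding the image.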

One of the positives about using Wan2.2 is that it can create a higher resolution latent image, if you have enough VRAM. I have successfully used a latent resolution of 1920 x 1536 on a 16GB VRAM card, which reduces the need to upscale using Hi-Res Fix, Ultimate SD Upscaler and so on.
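To put that resolution in context, here is a small sketch comparing its pixel count with a 1024 x 1024 image. The 1024 x 1024 baseline is my assumption of a typical native size for comparison and is not a figure from the testing above; the helper name is illustrative.

```python
def megapixels(width, height):
    """Return the pixel count of an image in millions of pixels."""
    return width * height / 1_000_000


wan_mp = megapixels(1920, 1536)       # resolution used above
baseline_mp = megapixels(1024, 1024)  # assumed comparison baseline

print(f"1920 x 1536: {wan_mp:.2f} MP")
print(f"1024 x 1024: {baseline_mp:.2f} MP")
print(f"Ratio: {wan_mp / baseline_mp:.2f}x")
```

At roughly 2.8 times the pixels of the assumed baseline, the image is already in the range an upscaling pass would often be used to reach.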

As an initial test I reused some existing prompts I had used with Flux.1 Krea and the results were very different.

The first image is of the festival woman I created using Flux.1 Krea. Next to it is the Wan2.2 version using the same prompt. To me this image looks more like a still from a video than a photograph, which given the background of the model is perhaps to be expected. I think the composition is a little lacking and it doesn’t have the depth and feel of the Flux.1 Krea image. It almost looks like the subject has been pasted on top of the background. The subject does look natural and realistic, with good skin tone, although perhaps a bit too perfect.

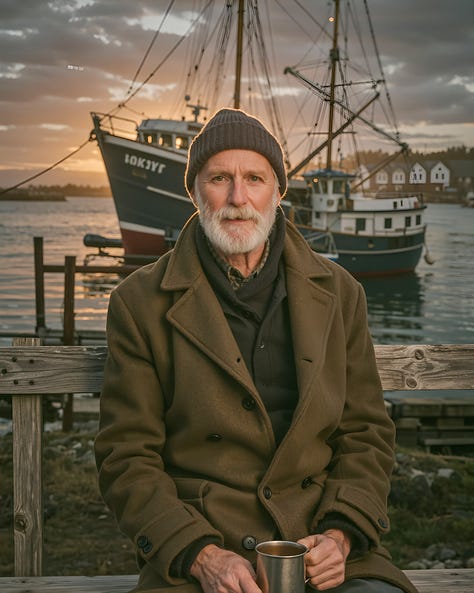

Next up, an old fisherman. The first image is from Flux.1 Krea; the other two are Wan2.2. The first Wan2.2 image used the same prompt as Krea, but I felt it didn’t do a good job with the prompt, so I reverse-engineered the Krea output into a detailed prompt and tried again, creating the third image.

The Krea image has an artistic/cinematic style to it, whereas the Wan2.2 images have a more standard photograph feel, which some may prefer if that is the style they want. However, the subjects look pasted onto a backdrop, and the lighting is very odd, like poor studio lighting.

The close-up portrait of a punk woman baffled me, as no matter what I did it kept creating a weird double head. I wondered if it was to do with the two different models, which run separately, but I haven’t seen this effect on other images. Ignoring the double head, the image doesn’t have the style and impact of the Krea version, although as a ‘snapshot’ image it does look natural and real.

I thought I would try a realistic-style anime image. I didn’t have high expectations, so I was really surprised by the output, especially considering I didn’t have a LoRA to use.

An attempt at a watercolour-style image was also impressive, and again no LoRA was used. This one did take a few runs to get a sensible composition, as the first couple had issues with the bench.

Clearly this is not exhaustive testing, but the testing I have done (and I haven’t included all the samples in the post) has given a mix of results, some of which are very inconsistent or poor in terms of composition. I can see why people may like the style, especially for more casual images, but for me it doesn’t have the detail or quality of a ‘professional photograph’. I find the composition lacking, especially with the backgrounds, and often the foreground just doesn’t blend properly with the background. These issues are odd because as a video model it is very good, so perhaps a different workflow or settings would help.

The surprise was the anime and watercolour images, which I thought would be poor but actually looked very good, although I had issues with the composition and had to do several runs on the watercolour-style image.

As I have commented before, saying one model is better than another can be rather subjective, and it very much depends on what you want. Given the comments I had seen, perhaps my expectation of what Wan2.2 could produce was too high.

That’s not to say Wan2.2 can’t produce good images. It can, and with a very different style to Flux, but the low ‘good to bad ratio’ of output images makes it almost unusable for me, especially as it is quite slow even with the acceleration LoRAs. Maybe there is something I am missing that needs to be tweaked to really make it shine, or maybe it just isn’t a style that appeals to me.

For certain very specific cases I can see myself using it, but given current limits around LoRAs (which may well change) and the way the workflow is constructed with the dual models, I can’t see it drawing me away from the Flux models or HiDream for pure images at the moment.